Latest Articles

6 Tips on How to Market Your API

Rebuttal: API keys can do everything

Announcing Zuplo's API Monetization

How to Make A Rickdiculous Developer Experience For Your API

Increase revenue by improving your API quality

How To Make API Governance Easier

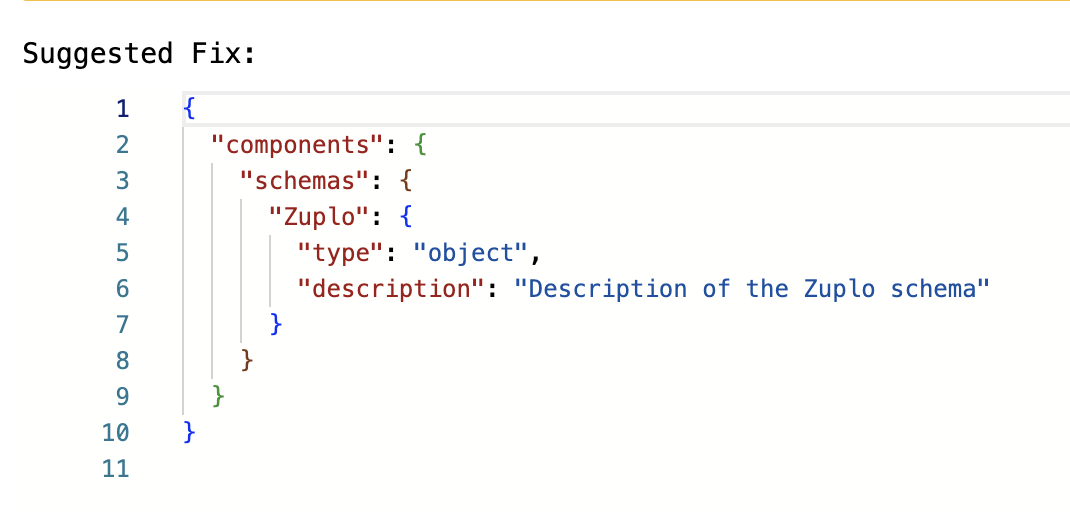

RateMyOpenAPI: now with AI suggestions for your OpenAPI!

Your API business needs to operate on the worldwide edge

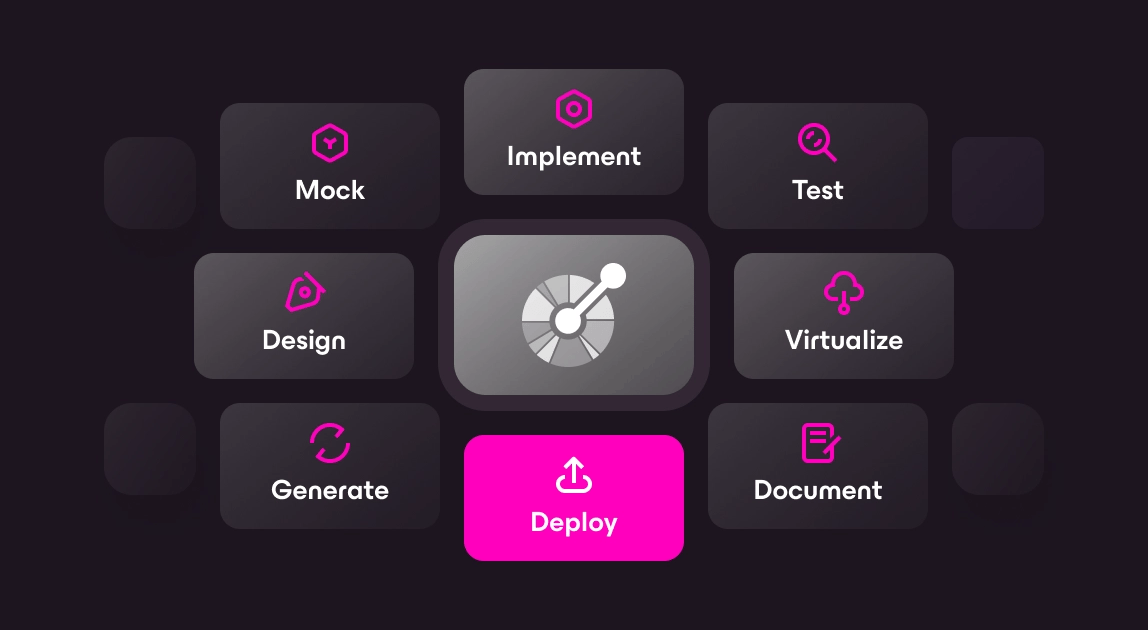

What's new on Zuplo?